SDLC Life Cycle Models Explained: The Surprising Frameworks Teams Overlook

This post explains how different SDLC models affect delivery and why choosing the right one matters, especially in legal tech, where compliance, risk, and integration are critical. It reviews the Waterfall, Agile, Spiral, V, incremental, RAD, and Big Bang models, noting when each fits and its common pitfalls, and highlights often-overlooked approaches (Spiral, the V model, contract-driven increments). Practical advice includes heuristics for selecting a model, common mistakes, testing and QA strategies, essential team roles, checklists, a sample contract-automation decision, metrics for success, and tips for switching models. The overall recommendation: favor hybrid iterative delivery paired with explicit risk analysis, testing, automation, and clear communication.


If you've spent any time building software, you've heard the phrase "software development life cycle" everywhere. But which SDLC life cycle models actually help teams ship reliable products, and which ones end up gathering dust in process documents? In my experience, teams often overuse one model and ignore others that would fit their problem better. That is especially true in legal tech, where requirements can be strict, stakeholders are risk-averse, and compliance matters as much as features.

In this post I walk through the common SDLC life cycle models, highlight the ones people miss, and give practical advice for applying each model to legal technology projects. Expect clear, conversational writing, small real-world examples, and tips you can use on your next contract automation or e-discovery project.

Why SDLC models still matter

At a surface level, a software development life cycle model is just a way to organize work. But the model you pick affects communication, budget, testing, and how quickly you can respond to change. Pick the wrong model and you’ll waste time, money, and goodwill. Pick a model that matches the project and the team, and you’ll ship faster with fewer surprises.

I learned this the hard way on a contract management project for a law firm. We started with a rigid plan, then found requirements changing every week as partners discovered edge cases. We switched to a more iterative approach and immediately reduced rework. That switch saved weeks of effort.

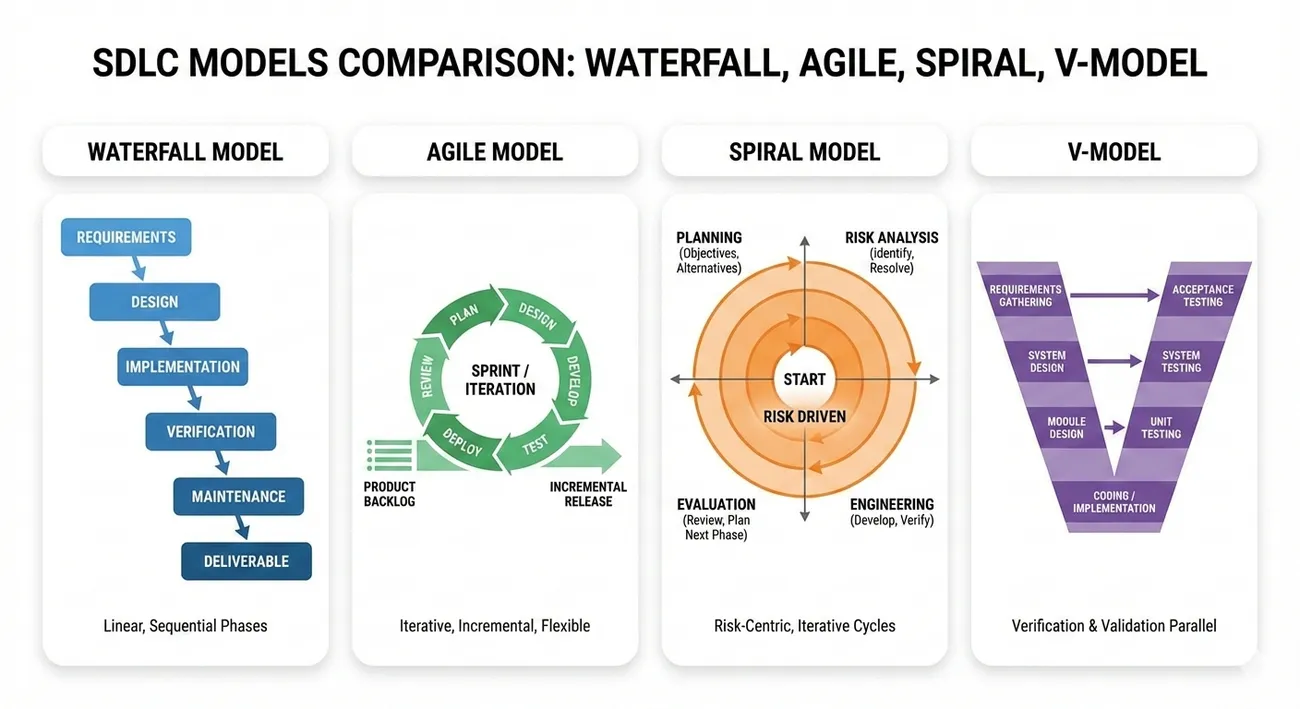

Top SDLC life cycle models to know

Below are the main models you’ll see in the wild. I include when they make sense, common pitfalls, and a simple legal tech example so you can picture how each model plays out in practice.

Waterfall model

What it is: Waterfall is linear and sequential. Teams finish one phase completely before moving to the next. Typical phases are requirements, design, implementation, testing, deployment, and maintenance.

When to use it: Waterfall works when requirements are stable and well understood. If you are building a rules-based compliance checklist where regulations are fixed, a waterfall plan can keep everyone aligned.

Common pitfalls: Waterfall assumes little or no change. In legal tech, that rarely holds for user-facing features. Also, testing happens late. Discovering major flaws after implementation is expensive and demoralizing.

Simple example: Build a regulation compliance engine where the rules are published and not expected to change for months. Waterfall helps define specs up front, then execute.

Agile model

What it is: Agile breaks work into short iterations, often called sprints. Teams deliver small increments of working software, gather feedback, and adapt. Popular frameworks include Scrum and Kanban.

When to use it: Choose Agile when requirements will evolve, stakeholders want early demos, or you need frequent course corrections. Legal tech projects with heavy user interaction, like a negotiation platform, do well with Agile.

Common pitfalls: Agile can become chaotic without discipline. Lack of a clear product backlog, inconsistent sprint goals, and skipped retrospectives are common mistakes. Also, stakeholders who expect a finished product every sprint can be disappointed when the increments are deliberately small.

Simple example: Build a clause library for lawyers. Deliver a basic search feature in the first sprint, then add tagging, suggestions, and analytics in later sprints. That way you learn what lawyers actually use.

Spiral model

What it is: The Spiral model focuses on repeated cycles of planning, risk analysis, engineering, and evaluation. It combines iterative development with explicit risk management.

When to use it: Spiral is great for projects with high uncertainty and significant risks. If you are building a system that integrates with sensitive client databases, or involves novel AI models for contract analysis, Spiral helps you tackle risks step by step.

Common pitfalls: Spiral requires discipline in risk assessment. Teams unfamiliar with formal risk analysis often treat it as extra paperwork, which defeats the purpose.

Simple example: Create an AI-powered clause extraction tool. In the first cycle, prototype the model, assess privacy and accuracy risks, then iterate. Each cycle targets the biggest remaining risks.
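To make "tackle the biggest risks first" concrete, here is a minimal sketch of a risk register for one Spiral cycle. It assumes the common exposure heuristic (probability times impact) and a 1-5 impact scale; the `Risk` class, `top_risks` function, and the example risks are hypothetical illustrations, not a standard tool.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """One entry in a hypothetical Spiral-cycle risk register."""
    name: str
    probability: float  # assumed: estimated likelihood, 0.0 to 1.0
    impact: int         # assumed: severity on a 1-5 scale

    @property
    def exposure(self) -> float:
        # Common heuristic: risk exposure = likelihood x impact.
        return self.probability * self.impact


def top_risks(register: list[Risk], limit: int = 2) -> list[Risk]:
    """Return the highest-exposure risks to address in the next cycle."""
    return sorted(register, key=lambda r: r.exposure, reverse=True)[:limit]


if __name__ == "__main__":
    register = [
        Risk("Client data leaks into training set", 0.3, 5),
        Risk("Extraction accuracy below reviewer tolerance", 0.6, 4),
        Risk("Slow inference on long contracts", 0.5, 2),
    ]
    for risk in top_risks(register):
        print(f"{risk.name}: exposure {risk.exposure:.1f}")
```

Re-scoring the register at the end of each cycle is what keeps the next cycle focused on whatever is now riskiest.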

V model

What it is: The V model is a variation of Waterfall that treats testing and validation as equal partners to development. Each development phase maps to a corresponding testing phase.

When to use it: Use the V model when verification and validation are critical. Legal tech systems that must meet strict audit trails, access controls, and regulatory reporting benefit from the V model.

Common pitfalls: People assume the V model is just rigid testing, but it actually forces you to think about testability early. The real mistake is skipping detailed test planning during requirements and design, then expecting testing at the end to go quickly.

Simple example: Build an e-filing system where audit logs, signatures, and compliance checks must be validated. Define tests alongside requirements, then implement and validate step by step.
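The core of the V model is traceability: every requirement maps to at least one test. A minimal sketch of that check, assuming requirements and tests are tracked as simple ID mappings (the IDs, test names, and the `trace_gaps` helper are hypothetical):

```python
# Hypothetical requirement IDs for an e-filing system.
requirements: dict[str, str] = {
    "REQ-1": "Every filing action is written to the audit log",
    "REQ-2": "Filings require a valid signature",
    "REQ-3": "Only authorized clerks can submit filings",
}

# Each test case declares which requirement(s) it verifies.
test_cases: dict[str, list[str]] = {
    "test_audit_log_records_submission": ["REQ-1"],
    "test_unsigned_filing_rejected": ["REQ-2"],
}


def trace_gaps(
    requirements: dict[str, str], test_cases: dict[str, list[str]]
) -> tuple[list[str], list[str]]:
    """Return (requirements with no test, tests not tied to a known requirement)."""
    covered = {req for reqs in test_cases.values() for req in reqs}
    untested = sorted(set(requirements) - covered)
    orphans = sorted(
        name
        for name, reqs in test_cases.items()
        if not reqs or any(r not in requirements for r in reqs)
    )
    return untested, orphans


if __name__ == "__main__":
    untested, orphans = trace_gaps(requirements, test_cases)
    print("Untested requirements:", untested)
    print("Orphan tests:", orphans)
```

Running a check like this in CI turns the V model's left-right pairing into something a build can fail on.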

Incremental and iterative models

What it is: Iterative development builds the system in repeated cycles, adding functionality each time. Incremental development divides the system into components delivered in chunks.

When to use it: These models are flexible. Use them when you want early value and can partition the system into useful pieces. They fit legal tech projects that can be delivered module by module, like a search module first, then a review module, then a reporting module.

Common pitfalls: Poor integration planning leads to messy systems. Teams deliver increments that don’t connect cleanly. Plan interfaces and integration tests early.

Simple example: Deliver a first increment that supports search and tagging. The second increment adds redaction and annotations. Users get value from day one.

Rapid Application Development (RAD) and prototyping

What it is: RAD emphasizes quick prototypes, user feedback, and iterative refinement. The idea is to build a working mockup fast and learn from it.

When to use it: RAD is useful when user experience matters and requirements are fuzzy. Legal tech UX is tricky. If lawyers hate the interface, adoption stalls. Prototypes help avoid that.

Common pitfalls: Prototypes can become permanent if not refactored properly. Teams sometimes skip cleaning up prototype code, creating technical debt.

Simple example: Prototype a document review workflow. Load dummy data, ask lawyers to walk through common tasks, then refine the design before building the full system.

Big Bang model

What it is: Big Bang means you throw resources at the project and hope things come together. There is little planning and no formal model.

When to use it: Honestly, rarely. Big Bang might fit tiny one-off projects or throwaway experiments, but I don't recommend it for legal tech, where failure has real costs.

Common pitfalls: High risk of missed requirements, poor quality, and wasted effort. Few teams succeed without strong leadership and obsessive coordination.

Surprising frameworks teams overlook

Everyone knows Waterfall and Agile. But a few models deserve more attention, especially in legal tech. These frameworks balance predictability and flexibility or emphasize risk in ways that suit regulated projects.

Spiral model revival

Why teams miss it: Spiral looks heavy because it asks you to do formal risk analysis. Many teams skip it because they think Agile handles everything.

Why it matters for legal tech: Legal systems often have high-stakes risks like privacy, compliance, and model fairness. Spiral helps you identify those risks early and test assumptions in small cycles.

Practical tip: Use Spiral when integrating third-party data, deploying ML models that affect legal outcomes, or when the project has unclear legal constraints. Do short risk workshops and treat findings as deliverables.

V model in compliance-first projects

Why teams miss it: The V model looks old school. People assume it is the same as Waterfall with prettier diagrams.

Why it matters for legal tech: The V model forces you to design test plans alongside requirements. For audit-heavy features, that alignment saves time and prevents surprise noncompliance during audits.

Practical tip: Pair requirement owners with test engineers during the requirements phase. Define acceptance criteria as test cases. It sounds obvious, but it rarely happens.
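Here is what "acceptance criteria as test cases" can look like in practice. This is a sketch under assumptions: `ApprovalService` is a hypothetical stand-in for the real system, and the criterion ("approving a contract records who, what, and when") is an example, not a requirement from a specific project. The test function uses plain `assert`, so it also runs under pytest.

```python
from datetime import datetime, timezone


class ApprovalService:
    """Hypothetical stand-in for the system under test."""

    def __init__(self) -> None:
        self.audit_log: list[dict] = []

    def approve(self, contract_id: str, user: str) -> None:
        # Record who approved what, and when (timezone-aware UTC).
        self.audit_log.append({
            "action": "approve",
            "contract_id": contract_id,
            "user": user,
            "timestamp": datetime.now(timezone.utc),
        })


def test_approval_is_audited() -> None:
    """Acceptance criterion: approving a contract records who, what, and when."""
    service = ApprovalService()
    service.approve("C-42", "a.partner")
    entry = service.audit_log[-1]
    assert entry["action"] == "approve"
    assert entry["contract_id"] == "C-42"
    assert entry["user"] == "a.partner"
    assert entry["timestamp"].tzinfo is not None  # auditors need unambiguous times


if __name__ == "__main__":
    test_approval_is_audited()
    print("acceptance criterion satisfied")
```

The docstring reads like the requirement, so the legal owner can review the test without reading the implementation.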

Incremental plus contract-driven interfaces

Why teams miss it: Many teams build increments without thinking about contracts between modules. That leads to fragile integrations.

Why it matters for legal tech: Legal systems often stitch together multiple services: a rules engine, a document store, an identity provider. Defining clear contracts between these systems reduces integration risk.

Practical tip: Use API contracts, OpenAPI specs, or consumer-driven contracts when delivering increments. Ship the interface first, then the implementation.
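"Ship the interface first" can be as lightweight as an agreed interface plus a contract check that every implementation must pass. The sketch below is in-process Python rather than an OpenAPI spec or a consumer-driven contract tool; `DocumentStore`, `InMemoryDocumentStore`, and the KeyError rule are hypothetical choices for illustration.

```python
from typing import Protocol


class DocumentStore(Protocol):
    """Hypothetical interface agreed on before any implementation ships."""

    def save(self, doc_id: str, content: bytes) -> None: ...

    def load(self, doc_id: str) -> bytes: ...


class InMemoryDocumentStore:
    """Stub that consumers build against until the real store is delivered."""

    def __init__(self) -> None:
        self._docs: dict[str, bytes] = {}

    def save(self, doc_id: str, content: bytes) -> None:
        self._docs[doc_id] = content

    def load(self, doc_id: str) -> bytes:
        # Contract rule (assumed): unknown IDs raise KeyError, never return None.
        return self._docs[doc_id]


def check_document_store_contract(store: DocumentStore) -> None:
    """Contract test: run it against the stub now and the real store later."""
    store.save("doc-1", b"Master services agreement")
    assert store.load("doc-1") == b"Master services agreement"
    try:
        store.load("missing")
    except KeyError:
        pass
    else:
        raise AssertionError("load() must raise KeyError for unknown IDs")


if __name__ == "__main__":
    check_document_store_contract(InMemoryDocumentStore())
    print("stub satisfies the DocumentStore contract")
```

When the real document store arrives in a later increment, it has to pass the same check before anyone integrates it.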

Choosing the right model for legal tech projects

There is no one-size-fits-all answer. Here are practical questions I ask when deciding on an SDLC life cycle model for a project. These are quick heuristics I use before a kickoff.

- How stable are the requirements? If stable, lean toward Waterfall or V model. If not stable, choose Agile or iterative models.

- How risky is the project? For high-risk projects use Spiral or V where testing and risk analysis happen early.

- Do you need early user feedback? If yes, prioritize Agile or prototyping approaches.

- How modular is the system? If you can cleanly split features, incremental development reduces time to value.

- What is the team’s discipline level? If the team lacks Agile practices, don’t fake Agile; either invest in coaching or pick a simpler model.

Answering these questions reduces the guessing. It’s not perfect, but it gives a strong starting point.
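If you like to keep kickoff heuristics in a repo next to the project, the questions above translate directly into a small function. This is a rough sketch, not a decision engine: `ProjectProfile`, `suggest_models`, and the suggestion wording are hypothetical, and the output is a starting point for discussion.

```python
from dataclasses import dataclass


@dataclass
class ProjectProfile:
    """Answers to the kickoff questions (hypothetical field names)."""
    stable_requirements: bool
    high_risk: bool
    needs_early_feedback: bool
    modular: bool
    team_practices_agile: bool


def suggest_models(p: ProjectProfile) -> list[str]:
    """Map each kickoff answer to a rough, non-binding model suggestion."""
    suggestions: list[str] = []
    if p.stable_requirements:
        suggestions.append("Waterfall or V model")
    elif p.team_practices_agile:
        suggestions.append("Agile or iterative")
    else:
        # Don't fake Agile: coach the team first or keep the process simple.
        suggestions.append("Invest in Agile coaching or pick a simpler model")
    if p.high_risk:
        suggestions.append("Spiral or V model: early risk analysis and test planning")
    if p.needs_early_feedback:
        suggestions.append("Agile demos or prototyping")
    if p.modular:
        suggestions.append("Incremental delivery by module")
    return suggestions


if __name__ == "__main__":
    contract_platform = ProjectProfile(
        stable_requirements=False,
        high_risk=True,
        needs_early_feedback=True,
        modular=True,
        team_practices_agile=True,
    )
    for line in suggest_models(contract_platform):
        print("-", line)
```

Several suggestions can apply at once, which is usually a hint that a hybrid model fits.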

Practical tips and common mistakes

I’ve seen the same mistakes repeated across teams. Here are the ones that matter most, and how to avoid them.

Mistake 1: Choosing a model first, then tailoring requirements to fit

Don't do this. The model should fit the problem, not the other way around. Teams sometimes pick Agile because it sounds modern. Then they shoehorn rigid compliance needs into sprints and burn out. Instead, pick a model based on the project characteristics listed above.

Mistake 2: Ignoring testing until late

Testing is not a checkbox at the end. Whether you use Agile, the V model, or Spiral, design tests early. In my experience, teams that write acceptance criteria as test cases cut rework roughly in half.

Mistake 3: Forgetting integration and operations

People focus on features and forget integration. If you build a contract review engine but never test it with real document stores or authentication providers, you’ll hit deployment roadblocks. Plan integration testing early and automate it.

Mistake 4: Over-optimizing for process

Process exists to help people, not the other way around. Too many policies, too many meetings, and too many templates slow teams down. Keep processes lightweight and continuously adjust them.

Mistake 5: Letting prototypes become production code

I love prototypes. They’re fast and clarify requirements. But leave them as prototypes unless you plan to rework them. Cheap fixes in prototype code become technical debt fast.

How testing and QA differ across models

Testing strategy depends a lot on your model. Here’s a quick comparison so you can plan accordingly.

- Waterfall: Test plans can be written early, but tests are executed only after implementation. This works for stable requirements but risks late surprises.

- V model: Tests map to each development phase. This gives structured traceability between requirements and tests.

- Agile: Continuous testing, with unit tests, integration tests, and frequent regression checks. Requires automation investment.

- Spiral: Tests focus on validating risk assumptions each cycle. You validate safety, privacy, and accuracy before scaling up.

In legal tech, invest in automated tests for audit trails, access control, and data handling. Those are the areas auditors will probe first.
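Access control is one of the easiest of these areas to automate. A minimal sketch, assuming a simple role-to-permission map (the roles, actions, and `can` helper are hypothetical): the expected decisions are written as a table the compliance owner can review, and the test also checks that unknown roles are denied by default.

```python
# Hypothetical role-to-permission map for a matter-management system.
PERMISSIONS: dict[str, set[str]] = {
    "partner": {"read", "edit", "approve"},
    "associate": {"read", "edit"},
    "paralegal": {"read"},
}


def can(role: str, action: str) -> bool:
    """Deny by default: unknown roles or actions get no access."""
    return action in PERMISSIONS.get(role, set())


def test_access_control_matrix() -> None:
    # Expected decisions, ideally written by the compliance owner.
    expected = {
        ("partner", "approve"): True,
        ("associate", "approve"): False,
        ("paralegal", "edit"): False,
        ("contractor", "read"): False,  # unknown role must be denied
    }
    for (role, action), allowed in expected.items():
        assert can(role, action) is allowed, f"{role} / {action}"


if __name__ == "__main__":
    test_access_control_matrix()
    print("access-control matrix ok")
```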

Team roles and responsibilities

Models are only useful if people know what to do. Below are roles that make a difference in legal tech projects.

- Product owner or legal domain owner: Someone who understands regulation or legal workflows and can prioritize features.

- Architect: Designs integration and compliance elements early.

- QA and compliance engineers: Pair them with product owners so testing and compliance specs align with requirements.

- DevOps or platform engineer: Automates deployment, monitoring, and security checks.

- Data scientist (if you’re using ML): Focuses on model evaluation, fairness, and privacy.

Small teams can combine roles, but never skip the compliance and QA voices. That is a common pitfall in legal tech projects.

Simple templates and checklists to get started

If you want to try a model quickly, here are small checklists you can use at kickoff. I keep these in my head, and often jot them on a whiteboard during the first meeting.

Waterfall quick checklist

- Document requirements in detail and get sign-off.

- Design architecture and interfaces.

- Create a test plan mapped to each requirement.

- Implement, then run tests, then deploy.

Agile quick checklist

- Create a prioritized product backlog with the legal domain owner.

- Run 2-week sprints with a demo at the end.

- Automate builds and tests to support continuous integration.

- Hold retrospectives and improve the process.

Spiral quick checklist

- Identify objectives and constraints for the cycle.

- Perform focused risk analysis for the highest risks.

- Prototype or implement small increments to address those risks.

- Evaluate results and plan the next cycle.

Real-world scenario: Picking a model for a contract automation platform

Let me walk you through a simple decision process using a real-world scenario. You are building a contract automation platform for corporate lawyers. The platform will generate contract drafts, apply company standard clauses, and track approvals.

Requirements: lawyers want fast drafts, must comply with internal policies, and need clear audit logs. Risks: incorrect clause selection could lead to liability. Integration: integrates with document storage and single sign-on. Timeline: deliver a minimal working product within four months.

Which model would I pick? I’d choose an iterative Agile approach with Spiral-like cycles for risk. Start with an MVP that handles a small set of clauses and a simple approval flow. Run short risk assessments each sprint to check clause accuracy and audit logging. Keep test cases tied to legal acceptance criteria and automate integration tests. This hybrid approach gives early value while explicitly managing the most important risks.
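The per-sprint risk check on clause accuracy can start as a comparison against a small, lawyer-labeled sample set. This sketch assumes such a set exists; `review_clause_selection`, the clause names, and the 0.95 gate threshold are hypothetical and should be set with the legal owner.

```python
def review_clause_selection(
    predicted: dict[str, str],
    expected: dict[str, str],
    threshold: float = 0.95,  # assumed gate; agree on the real value with legal
) -> tuple[float, list[str], bool]:
    """Compare generated clause choices to lawyer-approved answers.

    Returns (accuracy, mismatched sample IDs, whether the sprint's risk gate passes).
    """
    if not expected:
        raise ValueError("need at least one lawyer-labeled sample")
    mismatches = sorted(
        sample for sample, clause in expected.items() if predicted.get(sample) != clause
    )
    accuracy = 1 - len(mismatches) / len(expected)
    return accuracy, mismatches, accuracy >= threshold


if __name__ == "__main__":
    expected = {
        "NDA-01": "mutual-confidentiality",
        "NDA-02": "one-way-confidentiality",
        "MSA-01": "liability-cap",
        "MSA-02": "standard-indemnity",
    }
    predicted = dict(expected, **{"MSA-02": "broad-indemnity"})
    accuracy, mismatches, passed = review_clause_selection(predicted, expected)
    print(f"accuracy={accuracy:.2f} mismatches={mismatches} gate_passed={passed}")
```

The mismatch list matters as much as the score: it gives the risk review concrete drafts to walk through.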

Measuring success across models

Whatever model you use, measure the right things. Feature count alone is misleading. Here are metrics I care about for legal tech projects.

- Time to meaningful value. How long until users can do useful work?

- Defect rate in production. Issues that affect compliance are weighted heavily.

- User adoption and task completion rates. Do lawyers actually use the system?

- Cycle time for changes. How quickly can you fix or update rules?

- Audit and compliance pass rates. Does the system meet regulatory checks?

These metrics help you tune the process. For example, if defect rates are high after each sprint, invest in test automation or slower releases.
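Two of these metrics are simple to compute from your issue tracker export. A sketch under assumptions: the `Defect` record, the compliance weight of 5, and the (requested, deployed) date pairs are hypothetical stand-ins for whatever your tracker provides.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Defect:
    """Hypothetical production defect record."""
    ticket: str
    affects_compliance: bool


def weighted_defects(defects: list[Defect], compliance_weight: int = 5) -> int:
    """Count defects, weighting compliance-affecting ones more heavily (weight assumed)."""
    return sum(compliance_weight if d.affects_compliance else 1 for d in defects)


def median_cycle_time_days(changes: list[tuple[date, date]]) -> float:
    """Median days from change request to production deploy."""
    return median((deployed - requested).days for requested, deployed in changes)


if __name__ == "__main__":
    sprint_defects = [
        Defect("D-101", affects_compliance=True),
        Defect("D-102", affects_compliance=False),
    ]
    rule_changes = [
        (date(2024, 3, 1), date(2024, 3, 4)),
        (date(2024, 3, 2), date(2024, 3, 12)),
        (date(2024, 3, 5), date(2024, 3, 6)),
    ]
    print("weighted defects:", weighted_defects(sprint_defects))
    print("median cycle time (days):", median_cycle_time_days(rule_changes))
```

Median cycle time is less distorted by one stuck ticket than the mean, which suits small legal tech teams with uneven change volumes.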

Transitioning between models

Sometimes a project needs to change models midstream. Maybe you started with Waterfall, but requirements began to evolve. How do you migrate gracefully?

- Stop the guessing. Hold a reset session with stakeholders and explain reasons for changing the model.

- Keep documentation that still matters. Requirements and interfaces can be reused.

- Introduce new ceremonies slowly. If you switch to Agile, start with demos and retrospectives before changing everything.

- Invest in automation early. Continuous integration and deployment make transitions much smoother.

Changing models is disruptive, but often necessary. I find transparent communication and small pilot sprints reduce resistance.

Tooling and automation recommendations

Tools are not magic, but they help. For legal tech projects, focus on tooling that supports traceability, testing, and deployment.

- Version control: Git with clear branching models.

- CI/CD: Automated builds and tests on every commit.

- Testing: Unit tests, integration tests, and end-to-end tests. Use contract testing for service interfaces.

- Issue tracking: Jira, GitHub Issues, or similar with clear workflow definitions.

- Documentation: Keep requirements and test cases in a place where testers, devs, and legal owners can access them.

For projects involving ML models, add model evaluation pipelines that track accuracy, drift, and performance. Don’t deploy models without monitoring in place.
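One common way to watch for drift is the population stability index (PSI), which compares a baseline distribution with what the model sees in production. The sketch below assumes you already bin a feature (here, hypothetical clause-type proportions) into matching bins; the 0.25 alert threshold is a widely used rule of thumb, not a standard, so treat it as an assumption.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions (proportions).

    PSI = sum over bins of (actual - expected) * ln(actual / expected).
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


if __name__ == "__main__":
    baseline = [0.5, 0.3, 0.2]    # clause-type mix at validation time (hypothetical)
    this_week = [0.2, 0.3, 0.5]   # mix seen in production (hypothetical)
    score = psi(baseline, this_week)
    drifted = score > 0.25        # assumed rule-of-thumb alert threshold
    print(f"PSI={score:.3f} drift_alert={drifted}")
```

A drift alert doesn't prove the model is wrong; it tells you the inputs no longer look like what you validated against, so it's time to re-check accuracy.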

Final thoughts and recommendations

Choosing an SDLC life cycle model is less about following a label and more about matching the approach to the project's characteristics. For legal tech, the balance between change and compliance is the hard part. In my experience, hybrid approaches that mix iterative delivery with explicit risk and test planning work best.

Start by answering the simple questions in the Choosing the right model section. From there, pick a model that fits. If you need to shift later, do it deliberately and communicate clearly. Finally, invest early in testing, integration, and automation. Those investments pay off when auditors come knocking or when you must update rules fast.

If you want a starting point tomorrow, try this: pick an incremental first delivery that gives users immediate value, build acceptance tests during requirements, and run short risk reviews every other sprint. That combo gets you speed and reduces the chance of embarrassing compliance surprises.

Helpful Links & Next Steps

- Agami - Learn about our work at the intersection of law and technology.

- SDLC Life Cycle Models - Read the original blog on Agami's site.